- Blog

- Kurzweil pc2x craigslist

- Gand faadu jokes

- Skymaxx pro 4 horizon

- Emulador de playstation 1 gratis

- Northern california accel fuel injection tuning

- Purchase quickbooks 2013

- Denix sten mk ii

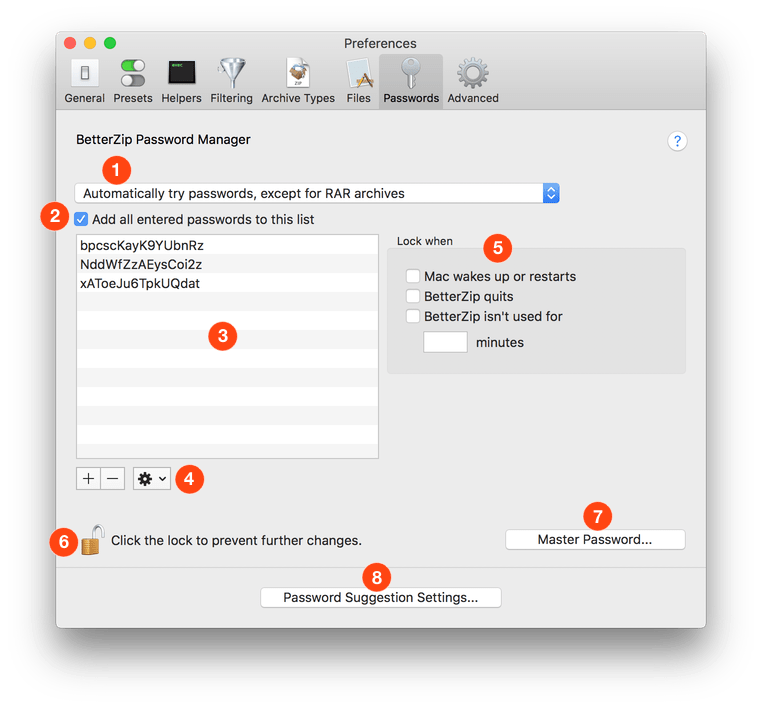

- Betterzip security

- M audio profire 610 driver windows 10

- Acrobat reader pro dc full version free download

- Pokemon indigo league game download full

- Mejores editores multipista para windos full link utorrent

- Malayalam latest movies 2019 download

- Trainz simulator 12 tutorials

> The file format is decompressed one record at a time

But not necessarily in the order they appear.

> many or most libraries can give you this as a stream, so it never has to hit disk or be “sent to /dev/null”.

My point is that the compressed bytes have to be decompressed and checksummed in both extraction and checking, but after that the bytes may either be written or discarded.

> If you take responsibility for streaming the records to disk (trivial), then you can check the canonical path before writing, and any other filesystem sanity tests you want to do.
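The idea quoted above, streaming each record yourself and checking the canonical path before writing, could be sketched roughly as follows. This is a minimal illustration using Python's standard `zipfile` module, not anyone's actual implementation; the function names `safe_extract` and `verify_only` and the chunk size are assumptions made up for the example.

```python
import os
import zipfile

CHUNK = 64 * 1024  # arbitrary read size chosen for this sketch


def safe_extract(archive, dest_dir):
    """Stream each member to disk, refusing paths that resolve outside dest_dir."""
    root = os.path.realpath(dest_dir)
    written = []
    with zipfile.ZipFile(archive) as zf:
        for info in zf.infolist():
            # Canonicalise the target before touching the filesystem.
            target = os.path.realpath(os.path.join(root, info.filename))
            if os.path.commonpath([root, target]) != root:
                raise ValueError(f"unsafe path in archive: {info.filename!r}")
            if info.is_dir():
                os.makedirs(target, exist_ok=True)
                continue
            os.makedirs(os.path.dirname(target), exist_ok=True)
            # zipfile decompresses as we read and checks the CRC at end of stream.
            with zf.open(info) as src, open(target, "wb") as dst:
                while chunk := src.read(CHUNK):
                    dst.write(chunk)
            written.append(target)
    return written


def verify_only(archive):
    """Decompress and checksum every member, discarding the bytes."""
    total = 0
    with zipfile.ZipFile(archive) as zf:
        for info in zf.infolist():
            with zf.open(info) as src:
                while chunk := src.read(CHUNK):
                    total += len(chunk)
    return total
```

Both functions do the same decompress-and-checksum work; the only difference is whether the bytes are written or dropped, which is the point made in the comment above.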


For a normal, created-in-one-shot ZIP, yes. But the types of ZIPs in the article are going to act differently if you tried streaming them. The core idea of the article is overlapping the various files within the ZIP. But this is only apparent if you're using the central directory, which you can't if you're streaming, since it appears after all the data. If you're streaming, you're using the local file headers, but for ZIPs such as those in the article, you will see far fewer local file headers than had you looked them up in the central directory, because they overlap. (In the streaming case, I think you'd see exactly one file. It would still be huge, given the other tricks in the article.)

Also, I think you can "append" to ZIPs (this is why the central directory is at the end, so that it can be overwritten by new data and then re-appended). I think this approach also allows tools to "delete" data by simply removing the entry from the central directory and re-appending the directory without it, so the central directory is essentially the authoritative source for the ZIP's contents. (Though I suppose a streaming decompressor could decompress to a temporary location and then only move non-deleted entries into their final place.)

> Tools that correctly read ZIP archives must scan for the end of central directory record signature, and then, as appropriate, the other, indicated, central directory records. They must not scan for entries from the top of the ZIP file, because (as previously mentioned in this section) only the central directory specifies where a file chunk starts and that it has not been deleted. Scanning could lead to false positives, as the format does not forbid other data to be between chunks, nor file data streams from containing such signatures.

Last year I implemented zip reading and zip writing in a hobby project of mine.

> Zip format can be de/compressed progressively, which is one reason why it’s nice for HTTP transport encoding.

Do you mean HTTP transfer encoding? If so, then it's not the zip archive format that's used, but rather the deflate compression algorithm (which zip also uses). I'm not an expert, but I know enough to write a working zip reader/writer.

From what I could see, there were no false positives for rejecting gaps between referenced local files on a small sample of 5000 archives.
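The check mentioned above, rejecting gaps between the local files that the central directory references, might look roughly like this. It is only a sketch under simplifying assumptions: it ignores Zip64, data descriptors, and any bytes between the last member and the central directory, and the names `member_spans` and `find_gaps_and_overlaps` are invented for illustration.

```python
import struct
import zipfile

LFH_FIXED = 30  # size of the fixed part of a local file header


def member_spans(fileobj):
    """Return sorted (start, end, name) byte ranges, one per central directory entry.

    fileobj must be a seekable binary file. Assumes no data descriptors and
    no Zip64 extensions (a simplification for this sketch).
    """
    with zipfile.ZipFile(fileobj) as zf:
        infos = zf.infolist()
    spans = []
    for info in infos:
        # Name and extra-field lengths live at offsets 26 and 28 of the local header.
        fileobj.seek(info.header_offset + 26)
        name_len, extra_len = struct.unpack("<HH", fileobj.read(4))
        end = info.header_offset + LFH_FIXED + name_len + extra_len + info.compress_size
        spans.append((info.header_offset, end, info.filename))
    return sorted(spans)


def find_gaps_and_overlaps(spans):
    """Walk the sorted spans and report unreferenced gaps and overlapping members."""
    findings = []
    cursor = 0  # furthest byte covered so far
    for start, end, name in spans:
        if start > cursor:
            findings.append(("gap", cursor, start, name))
        elif start < cursor:
            findings.append(("overlap", start, min(cursor, end), name))
        cursor = max(cursor, end)
    return findings
```

An archive written in one pass should produce no findings, while an incrementally updated archive (with a dead zone) or an overlapping-files archive like those in the article would produce at least one.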


The goal is to scan email attachments at the gateway and reject zip archives that might prove dangerous to more vulnerable zip software running downstream (MS Office, macOS, etc.).

Also, as you say, I don't think incrementally updated archives are used much.

Yes, I made a decision to reject incrementally updated archives. It was a feature back in the day, but as you say it also leaves dead zones in the archive. For a rarely used feature, it's a dangerous feature. These "invisible" dead zones can be used for malware stuffing, or to exploit ambiguity across different zip implementations: those that parse forwards (using the local file header as the canonical header) and those that parse backwards (using the central directory header as the canonical header).

For example, a malware author might put an EXE in the first version of a file, and a TXT in the second version of that same file. Of course, the spec advocates parsing backwards according to the central directory record, but implementations exist that don't do this.
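The forwards-versus-backwards ambiguity described above can be seen with a few lines of parsing code. The sketch below reads an archive both ways: backwards from the end of central directory record, and forwards by naively scanning for local file header signatures. The function names are hypothetical, and the parser is deliberately simplified: it assumes a single-disk archive with no Zip64 records, no prepended data, and no signature bytes inside the archive comment.

```python
import struct

EOCD_SIG = b"PK\x05\x06"  # end of central directory record
CDH_SIG = b"PK\x01\x02"   # central directory file header
LFH_SIG = b"PK\x03\x04"   # local file header
EOCD_FMT = "<4sHHHHIIH"   # fixed 22-byte part of the EOCD record


def read_central_directory(data: bytes):
    """Parse backwards: find the EOCD, then list (filename, local_header_offset)."""
    pos = data.rfind(EOCD_SIG)
    if pos < 0:
        raise ValueError("no end of central directory record found")
    _, _, _, _, total, _cd_size, cd_offset, _ = struct.unpack_from(EOCD_FMT, data, pos)
    entries = []
    p = cd_offset
    for _ in range(total):
        if data[p:p + 4] != CDH_SIG:
            raise ValueError("bad central directory header")
        name_len, extra_len, comment_len = struct.unpack_from("<HHH", data, p + 28)
        (lfh_offset,) = struct.unpack_from("<I", data, p + 42)
        # cp437 is the legacy default; the UTF-8 flag bit is ignored in this sketch.
        name = data[p + 46:p + 46 + name_len].decode("cp437")
        entries.append((name, lfh_offset))
        p += 46 + name_len + extra_len + comment_len
    return entries


def scan_local_headers(data: bytes):
    """Parse forwards: collect every offset where a local header signature appears."""
    offsets = []
    p = data.find(LFH_SIG)
    while p >= 0:
        offsets.append(p)
        p = data.find(LFH_SIG, p + 4)
    return offsets
```

If a stored (uncompressed) member happens to contain the bytes `PK\x03\x04`, the forward scan reports an extra "header" that the central directory does not list, which is the kind of disagreement between implementations that the comment warns about.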
